Hello all, I have been trying to implement ORB_SLAM2 using kinect in ROS. I have cloned the source code of ORB_SLAM from github.

Now I am confused on how to launch it with kinect. Can anyone suggest me a way?

Hi raktim,

This package looks very promising  but I will not be able to help you if you do not tell me what the problem is. maybe let’s start from the beginning: You are able to run the depth sensor from your kinect and show its result, for example in Rviz? And which version of kinect do you have?

but I will not be able to help you if you do not tell me what the problem is. maybe let’s start from the beginning: You are able to run the depth sensor from your kinect and show its result, for example in Rviz? And which version of kinect do you have?

Regards,

Hubert.

No sir, I have not been able to run depth sensors and show its results.

I am using the xbox360 kinect and want to create a map in real time using it…

Hi raktim,

If you just starting your adventure with kinect and ROS I recomend you to look closer on rtabmap_ros package. You can find it here. On ROS wiki you can also find tutorials which should be very helpful for you on start. It’s using freenect or openni packages to get depth sensor data, so you have to remember to install it before you start using rtabmap_ros.

Regards,

Hubert.

Hey Hubert, Thanks a lot for the suggestion. Now that I’ve installed Rtab_Map and and created a map of my room using kinect, I want to do something more with it. Do you have any suggestions on it? I was thinking of checking out the loop closure detection and mapping-building efficiency in different lighting conditions. Please do comment on this.

Regards,

Raktim.

Hi raktim,

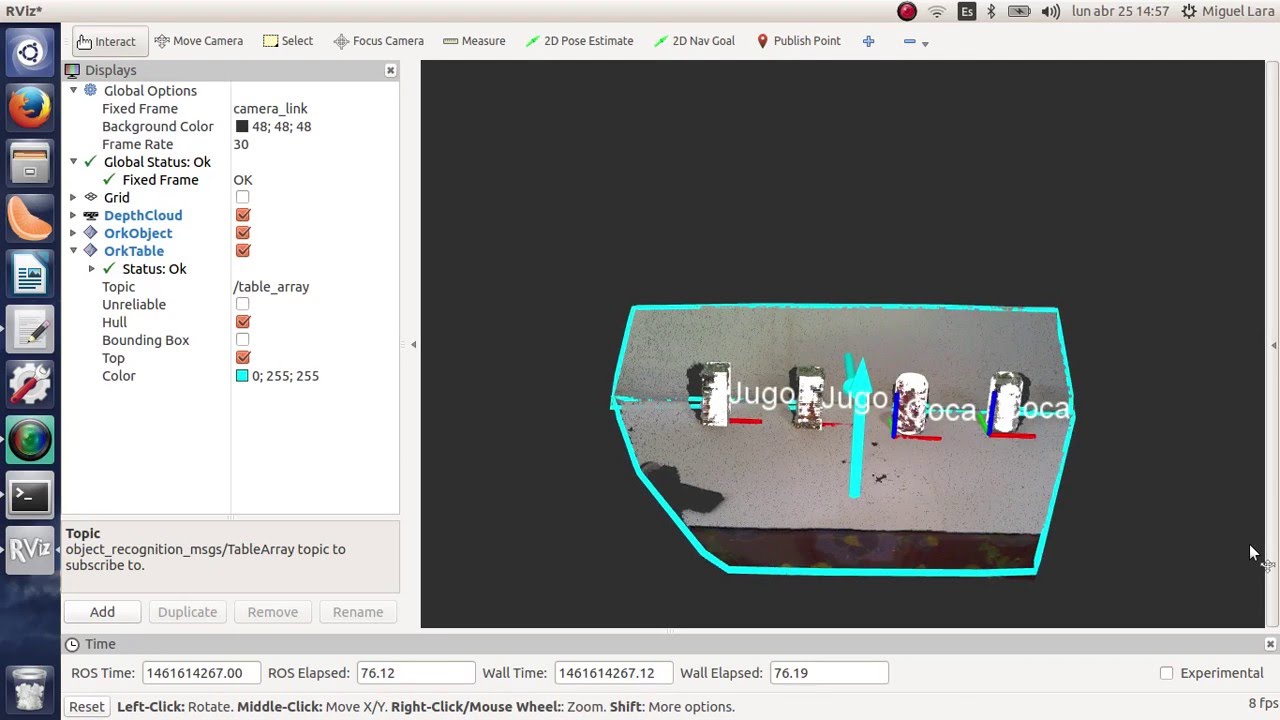

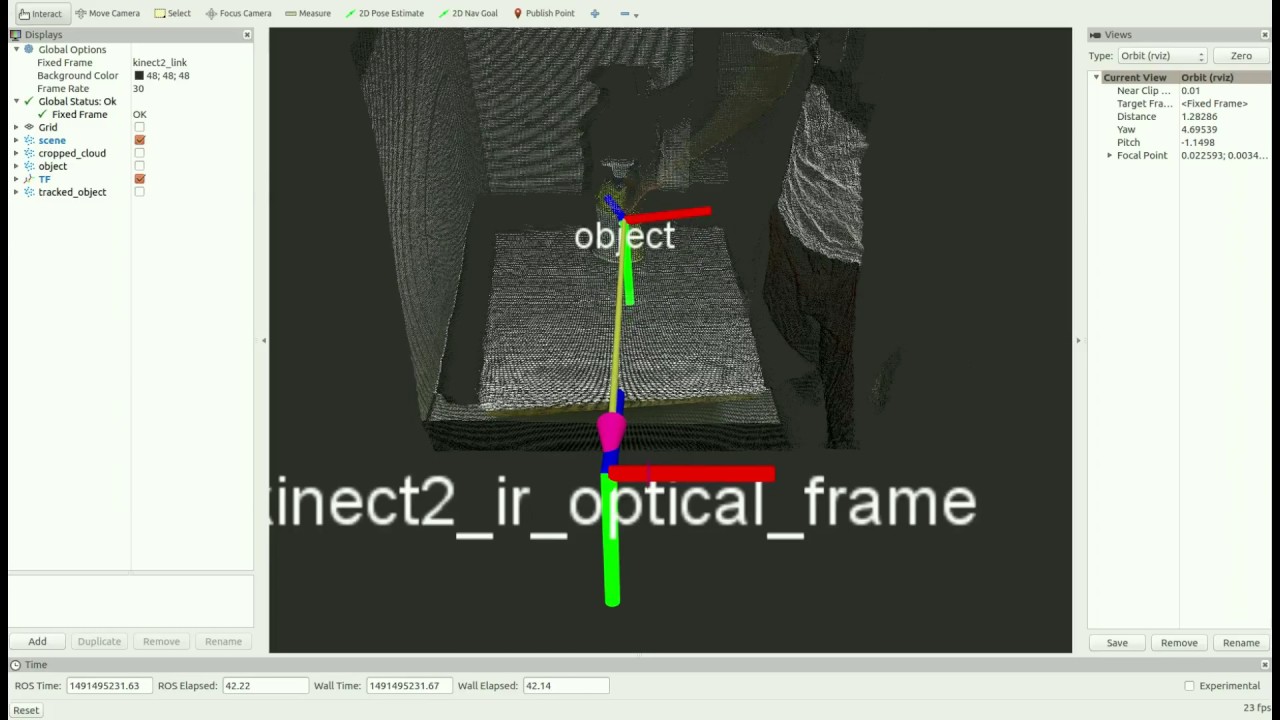

Your suggestions for further projects are very interesting but maybe you will be interested in going in a different direction. I suggest exploring the subject of object recognition and determining their location. You can find a lot of examples in internet:

or:

You can use PCL, ORK, etc. Possibilities of using these libraries are huge.

I can’t wait to get the results of your work.

Regards,

Hubert.

Hello

I am using rtabmap_ros with ROSbot’s /camera/color/image_raw, /camera/color/camera_info and /camera/depth/image_raw.

But, as a photo, depth image effect results like this and how should I do it and it is not possible directly use of camera topic of ROSbot?

Best Regards

gcold

Hi @goldcold,

It is great you have configured 3D mapping from rtabmap_ros with ROSbot 2.

I need more information about your project.

Please show the launch files and rtabmap_ros configuration.

Best regards,

JD

This is my launch for rtapmap_ros.

<param name="approx_sync" type="bool" value="true"/>

</node>

<node pkg="rtabmap_slam" type="rtabmap" name="rtabmap" output="screen" args="$(arg rtabmap_args)">

<param name="database_path" type="string" value="$(arg database_path)"/>

<param name="frame_id" type="string" value="base_link"/>

<param name="map_frame_id" type="string" value="map"/>

<param name="odom_frame_id" type="string" value=""/>

<param name="wait_for_transform" type="bool" value="true"/>

<param name="subscribe_depth" type="bool" value="false"/>

<param name="subscribe_scan" type="bool" value="true"/>

<param name="subscribe_stereo" type="bool" value="false"/>

<param name="subscribe_rgbd" type="bool" value="true"/>

<param name="subscribe_odom" type="bool" value="true"/>

<param name="subscribe_rgb" type="bool" value="false"/>

<!-- use actionlib to send goals to move_base -->

<!--param name="use_action_for_goal" type="bool" value="true"/>

<remap from="move_base" to="/move_base"/-->

<!-- inputs -->

<remap from="scan" to="/scan"/>

<remap from="odom" to="/odom"/>

<remap from="rgbd_image" to="rgbd_image"/>

<!-- output -->

<!--remap from="grid_map" to="/map"/-->

<!-- RTAB-Map's parameters -->

<param name="Reg/Strategy" type="string" value="1"/> <!-- 0=Visual, 1=ICP, 2=Visual+ICP -->

<param name="Reg/Force3DoF" type="string" value="true"/> <!-- 2D SLAM -->

<param name="GridGlobal/MinSize" type="string" value="20"/>

<!-- Do also proximity detection by space by merging close scans together. -->

<param name="RGBD/ProximityPathMaxNeighbors" type="string" value="10"/>

<param name="Grid/Sensor" type="string" value="0"/>

<!-- localization mode -->

<param if="$(arg localization)" name="Mem/IncrementalMemory" type="string" value="false"/>

<param unless="$(arg localization)" name="Mem/IncrementalMemory" type="string" value="true"/>

</node>

<!-- visualization with rtabmap_viz -->

<!--node if="$(arg rtabmap_viz)" pkg="rtabmap_viz" type="rtabmap_viz" name="rtabmap_viz" args="-d $(find rtabmap_demos)/launch/config/rgbd_gui.ini" output="screen">

<param name="frame_id" type="string" value="base_footprint"/>

<param name="approx_sync" type="bool" value="true"/>

<remap from="odom" to="/odom"/>

<remap from="scan" to="/scan"/>

</node>-->

Btw, is there any complexity with new ROS_noetic OS?Because around five times with new OS, I lost my wifi driver after sudo apt upgrade.

Best Regard

gcold

Hello @goldcold,

we are aware of this bug sometimes occurring in a new Noetic ROS image after the sudo apt upgrade command. At this point, I recommend reinstalling the system image.

Best regards

Jan Brzyk

Yes, I don’t understand why do new version of kernel 5.4.0.1095-raspi miss cfg8821 for wireless.