by using the ROSbot 2.0 controlled in movement (direction and twist) by a PC software, after a while, one can see that:

Warning: Invalid argument passed to canTransform argument source_frame in tf2 frame_ids cannot be empty

at line 126 in /tmp/binarydeb/ros-melodic-tf2-0.6.5/src/buffer_core.cpp

After that, the movement is not working anymore.

But the bot is still controllable.

To fix that: one has to reboot the bot.

When: This happened here after about 1 minutes after start of program.

Remarks: With the old EKF and Firmware, I did not see that at all (also after hours remote controlling the rotobt.

Additionally: After starting the core and ekf, one can see this message also very often.

Hi matthias.

We will have to take a deeper look into this problem, but your observations are very valuable. I really thank you for the time spent on checking what is going on and narrowing down the possible amount of issues.

My initial guess is that the /odom/wheels topics is either not published or kalman filter ignores it.

As I said in the first place. We will investigate it and help you as soon as possible.

Best regards,

Krzysztof Wojciechowski.

I have also this message:

[ WARN] [1648631226.772251067]: Could not obtain transform from to odom. Error was Invalid argument passed to lookupTransform argument source_frame in tf2 frame_ids cannot be empty

[ WARN] [1648631226.776708998]: Failed to meet update rate! Took 0.15147158400000002021

[ WARN] [1648631226.779171886]: Failed to meet update rate! Took 0.10397268000000001176

Maybe it is related to the other?

Hi matthias

Yup. This seems like what I wrote previously about. Please give us some time to investigate that.

Best regards,

Krzysztof Wojciechowski.

Hi Krzysztof,

I see here a massive angle error. Could it be, that the odom problem can cause that?

I am calculating the z (yaw) angle from angle vector + w like that:

tf::Quaternion q(gOdometer.pose.pose.orientation.x,

gOdometer.pose.pose.orientation.y,

gOdometer.pose.pose.orientation.z,

gOdometer.pose.pose.orientation.w);

tf::Matrix3x3 m(q);

double roll, pitch, yaw;

m.getRPY(roll, pitch, yaw);

if (isnan(yaw)) {

yaw = 0;

}

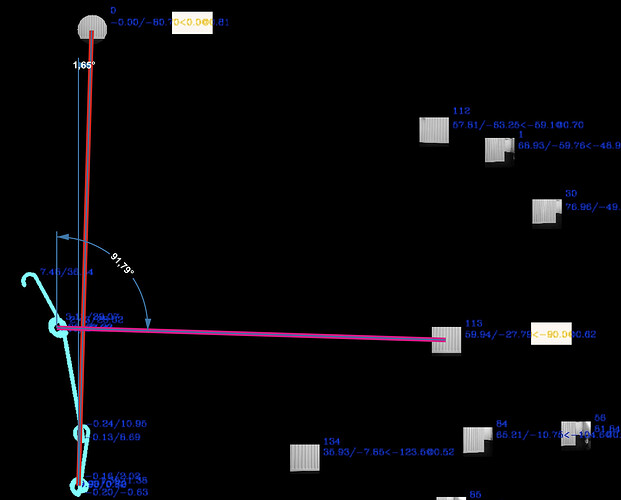

If the robot sees the wall heating for the first time, it uses the angle 0 (red line on image).

If it sees the wall heating for the 4th time, it uses 90 degrees (pink line on image).

Could this massive error come from this odom problem?

The blue “line” is the robot movement. It scans the room by twisting around the z axis (starting at x/y=0) and if it gets boared (it knows all parts of the room so far) it move a bit until the view is more interesting for it and starts twisting again, and so on. Actually, not the robot is doing that but the PC which remote controls the robot

EDIT:

I am doing now a yaw angle correction by myself. It turned out, that the yaw angle is around 20 degrees too big after one 360 twist. So, I am caring about my own yaw angle by added raw yaw delta values corrected by a correction factor. This leads to a almost correct angle representation in my machine learning software. The objects are now at the almost correct place in the picture.

It would be very nice of your team, if one could look into that issue too.

And, there is another one: The twist path is represented in the odometry data X and Y positions in the wrong way: My robot twists clock wise but the X/Y coordinates are sent counter clock wise. Look at the end of the path in the pciture, it is turning left, instead of right:

But the forward movements are correct. I checked all my data paths if I have a X/Y problem, but there is no. Also the objects in the room are on their correct places for which I am using also the robots X/Y position and the angle to calculate their places.

So: Why is the twist reverse then?

Hi matthias.

The problem with yaw rotation is that for skid drive, it is strongly related to the surface it’s driving on. In some cases, it is a matter of hard-coding fine-tuned parameters for a given surface. Currently our parameters were something that should be flexible enough to work on most surfaces and be correctable by AMCL. It is best to fuse odometry with IMU data in Kalman filter, so it should correct for overshoots in rotation.

If you want to have more precise localizing, you can use AMCL on premapped environment. It should correct you globally. Then your position is a simple transform between /world and /base_link.

In terms of your previous problem, we are still investigating what is going on with EKF.

Best regards,

Krzysztof Wojciechowski.

Hi matthias,

I am working on observing your issue on my robot, but I have trouble with it because it doesn’t happen in my case. Can you tell me something more, for example, about your launch, robot bringup and computing power demand in your use case? Are both Twist and Pose data published on the /odom/wheels topic? What happens to the odom-baselink transformation after some time?

My initial guess is that you can reduce the frequency of ekf package or check timeout for ekf sensors.

Please, let me know about it. If you have any questions/troubles, do not hesitate to ask.

Best regards,

Paweł